2026 model brief

ChatGPT vs Claude vs Gemini

A deeper 2026 comparison of GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro across coding strength, pricing, context windows, tool surfaces, and what each ecosystem is actually best at.

ChatGPT vs Claude vs Gemini: which should you pick right now?

There is still no honest universal winner. The meaningful split is platform shape, not fan sentiment. OpenAI has the broadest all-around product surface. Anthropic makes the cleanest coding-and-agents argument. Google has the strongest ecosystem leverage when search, Workspace, Vertex, or multimodal routing already matter to your stack.

The official docs now make this easier to see. OpenAI has moved the frontier story to GPT-5.5: stronger agentic coding, computer use, research, knowledge work, and longer-horizon tool use than GPT-5.4. Anthropic has Claude Opus 4.7 as its generally available premium ceiling for coding, agent workflows, memory-heavy work, and high-resolution vision. Google is positioning Gemini 3.1 Pro around harder reasoning, multimodal workflows, search grounding, and Google-native enterprise deployment.

So the real question is not which model sounds smartest in a demo. It is which ecosystem makes your actual workflow cheaper, faster, and more reliable once you account for tool surface, context windows, review processes, latency, procurement, and lock-in.

Current product snapshot (2026)

These are the current product facts that matter most when you are choosing an ecosystem rather than arguing about one benchmark chart.

| Ecosystem | Current read | Useful numbers (prices in USD per 1M input / output tokens) | Best fit | Main watchout |
|---|---|---|---|---|
| ChatGPT / OpenAI | Broadest all-around platform | GPT-5.5 is priced at $5 in / $30 out with a 1M-token API context window; GPT-5.5 Pro is much more expensive at $30 in / $180 out; OpenAI still has the broadest tool and product surface | Teams that want one vendor across chat, API, search, code, and execution | Higher flagship pricing than GPT-5.4 and a very broad surface area that can encourage deeper lock-in |
| Claude / Anthropic | Strongest coding and agent-work case | Sonnet 4.6 remains the practical workhorse; Opus 4.7 is $5 in / $25 out, uses model ID claude-opus-4-7, supports 1M context, 128K output, adaptive effort, tools, files, PDFs, memory, and high-resolution images | Codebase work, long-horizon tasks, document-heavy reasoning, and higher-trust agent execution | Premium price, narrower surrounding product surface, and a real migration checklist because sampling parameters, extended thinking, token counting, and thinking display behavior changed |
| Gemini / Google | Best ecosystem leverage play | Gemini 3.1 Pro Preview is $2 in / $12 out below 200K input tokens, $4 in / $18 out above 200K, and has a 1M / 64K context profile in the Gemini docs | Google-heavy stacks, search-grounded workflows, multimodal apps, and cost-sensitive routing | Naming, preview status, and product-surface differences still create buyer confusion |
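To make the pricing column concrete, here is a minimal Python sketch that estimates the list-price cost of one request from the per-million-token prices in the table. It is a sketch under stated assumptions: the labels "gpt-5.5", "gpt-5.5-pro", and "gemini-3.1-pro" are hypothetical keys rather than confirmed API model IDs (only claude-opus-4-7 comes from this article), it assumes the prompt size selects Gemini's price tier for the whole request, and it ignores caching, batch, and grounding charges. Check each vendor's pricing page for the exact rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """List price in USD per 1M tokens, as quoted in the snapshot table."""
    input_per_m: float
    output_per_m: float


# Flat-priced models. Keys are illustrative labels, not guaranteed API model IDs.
FLAT_PRICES = {
    "gpt-5.5": Price(5.0, 30.0),
    "gpt-5.5-pro": Price(30.0, 180.0),
    "claude-opus-4-7": Price(5.0, 25.0),
}

# Gemini 3.1 Pro Preview has two tiers split at 200K input tokens.
GEMINI_TIER_THRESHOLD = 200_000
GEMINI_LOW = Price(2.0, 12.0)
GEMINI_HIGH = Price(4.0, 18.0)


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD list-price cost of a single request."""
    if model == "gemini-3.1-pro":
        # Assumption: the prompt size picks one tier for the whole request.
        price = GEMINI_HIGH if input_tokens > GEMINI_TIER_THRESHOLD else GEMINI_LOW
    else:
        price = FLAT_PRICES[model]
    return (input_tokens * price.input_per_m + output_tokens * price.output_per_m) / 1_000_000


if __name__ == "__main__":
    for name in ("gpt-5.5", "claude-opus-4-7", "gemini-3.1-pro"):
        print(name, round(request_cost(name, 150_000, 8_000), 4))
```

For a 150K-token prompt with 8K tokens of output, this gives $0.99 on GPT-5.5, $0.95 on Opus 4.7, and about $0.40 on Gemini 3.1 Pro at the lower tier. Push the same prompt past 200K tokens and the Gemini estimate roughly doubles on input, which is why the tier boundary matters for long-document routing.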

The benchmark trap is still the easiest way to buy the wrong model

The current official product pages are more informative than most benchmark threads because they expose what the vendors are actually selling. OpenAI is selling an ecosystem with built-in tools, search, and execution. Anthropic is selling reliability on coding, agentic work, computer use, memory, and higher-fidelity vision. Google is selling model capability plus grounding, multimodality, and cloud leverage.

That is why raw benchmark talk is often a distraction. The most expensive failure mode is not picking the model that scored two points lower on a leaderboard. It is picking the ecosystem that forces awkward routing, weak governance, higher review burden, or a tool stack your team will not really use.

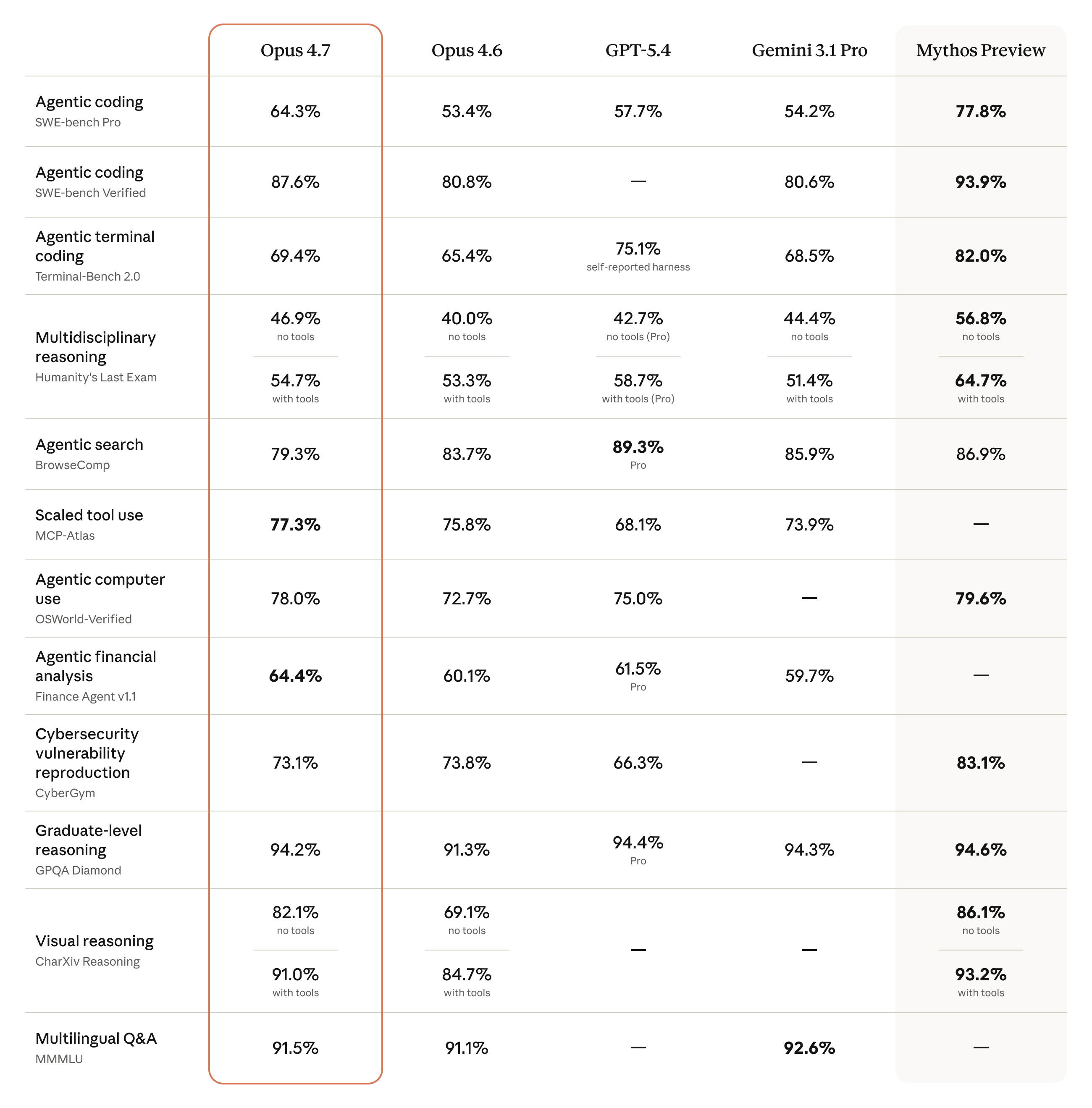

Current benchmark snapshot: GPT-5.5 reshapes the OpenAI read, while Opus 4.7 still defines the Claude read

The useful move is not to crown one model from one vendor table. Read the benchmarks by workload: code repair, terminal work, knowledge work, browser/search tasks, computer use, tool orchestration, and long-context reliability each tell a different story.

| Benchmark | Current numbers | What it means |
|---|---|---|
| SWE-bench Pro | Opus 4.7: 64.3%; GPT-5.5: 58.6%; GPT-5.4: 57.7%; Gemini 3.1 Pro in OpenAI's comparison table: 54.2% | Claude Opus 4.7 remains the cleaner published coding leader on this benchmark, but GPT-5.5 narrows OpenAI's gap while improving the surrounding agent platform. |
| SWE-bench Verified | Opus 4.7: 87.6%; Opus 4.6: 80.8%; Gemini 3.1 Pro in Anthropic's comparison table: 80.6%; Claude Mythos Preview (not generally available): 93.9% | The practical message is not that every team should use the most expensive model. It is that Anthropic's top generally available Opus tier now has a much stronger verified-code story than Opus 4.6 had. |
| Terminal-Bench 2.0 | GPT-5.5: 82.7%; GPT-5.4: 75.1%; Opus 4.7: 69.4%; Gemini 3.1 Pro in OpenAI's comparison table: 68.5% | OpenAI now has the clearest terminal-work signal. For CLI-heavy agents, repo automation, and command-line workflows, GPT-5.5 deserves first evaluation. |
| GDPval-AA knowledge work | GPT-5.5: 84.9%; GPT-5.4: 83.0%; Opus 4.7: 80.3%; Gemini 3.1 Pro in OpenAI's comparison table: 67.3% | OpenAI has the stronger current all-around knowledge-work signal in its public table, especially when the work crosses documents, spreadsheets, research, and tool use. |
| OfficeQA Pro document reasoning | OpenAI table: GPT-5.5 54.1%, GPT-5.4 53.2%, Opus 4.7 43.6%, Gemini 3.1 Pro 18.1%; Anthropic separately reports a major Opus 4.7 document-reasoning lift over Opus 4.6 | Do not overread one OfficeQA framing. Dense document workflows need direct testing with your actual PDFs, spreadsheets, screenshots, and review rubrics. |
| OSWorld-Verified computer use | GPT-5.5: 78.7%; Opus 4.7: 78.0%; GPT-5.4: 75.0% | OpenAI and Anthropic are both credible for computer-use agents. GPT-5.5 has the slight public edge here, while Claude's high-resolution vision still matters for dense screenshots. |
| BrowseComp and search-heavy work | GPT-5.5 Pro: 90.1%; GPT-5.4 Pro: 89.3%; Gemini 3.1 Pro: 85.9%; GPT-5.5: 84.4%; Opus 4.7: 79.3% | Search-heavy and browsing tasks still favor OpenAI and Gemini over Anthropic, so research assistants should keep routing options open. |
| MCP Atlas and tool use | Opus 4.7: 79.1%; Gemini 3.1 Pro: 78.2%; GPT-5.5: 75.3%; GPT-5.4: 70.6% | The tool-use picture is not one-sided. Claude remains very strong on protocol-style tool orchestration even where GPT-5.5 wins terminal and general computer-use benchmarks. |
| GPQA Diamond | GPT-5.4 Pro: 94.4%; Gemini 3.1 Pro: 94.3%; Opus 4.7: 94.2%; GPT-5.5: 93.6% | At this tier, graduate-level reasoning is tightly clustered. Vendor fit, tools, pricing, governance, and latency usually matter more than tenths of a point. |
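One way to follow the read-by-workload advice is to keep the published numbers in a small structure and ask who leads each benchmark, and by how much, instead of averaging everything into one score. The sketch below copies a subset of the rows above; note that some figures come from OpenAI's comparison table and others from Anthropic's, so treating them as one dataset is itself a simplification. The `leader` and `margin` helpers are illustrative, not any vendor's methodology.

```python
# Published scores (%) copied from a subset of the benchmark table above.
SCORES: dict[str, dict[str, float]] = {
    "SWE-bench Pro": {"Opus 4.7": 64.3, "GPT-5.5": 58.6, "GPT-5.4": 57.7, "Gemini 3.1 Pro": 54.2},
    "Terminal-Bench 2.0": {"GPT-5.5": 82.7, "GPT-5.4": 75.1, "Opus 4.7": 69.4, "Gemini 3.1 Pro": 68.5},
    "OSWorld-Verified": {"GPT-5.5": 78.7, "Opus 4.7": 78.0, "GPT-5.4": 75.0},
    "MCP Atlas": {"Opus 4.7": 79.1, "Gemini 3.1 Pro": 78.2, "GPT-5.5": 75.3, "GPT-5.4": 70.6},
    "GPQA Diamond": {"GPT-5.4 Pro": 94.4, "Gemini 3.1 Pro": 94.3, "Opus 4.7": 94.2, "GPT-5.5": 93.6},
}


def leader(benchmark: str) -> tuple[str, float]:
    """Return the (model, score) pair with the highest published score on one benchmark."""
    results = SCORES[benchmark]
    best = max(results, key=results.__getitem__)
    return best, results[best]


def margin(benchmark: str) -> float:
    """Gap in points between first and second place; small gaps are weak evidence."""
    top_two = sorted(SCORES[benchmark].values(), reverse=True)[:2]
    return round(top_two[0] - top_two[1], 1)


if __name__ == "__main__":
    for name in SCORES:
        model, score = leader(name)
        print(f"{name}: {model} at {score}% (lead of {margin(name)} points)")
```

On these rows, the GPQA Diamond lead is 0.1 points, the tight clustering described above, while Terminal-Bench 2.0 shows a 7.6-point gap. A wide margin on the benchmark closest to your workload is a much stronger signal than a narrow win on an unrelated one.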

What matters more than benchmark fan wars

The current official sources all point to the same deeper truth: choosing a model family is really choosing an operating model.

- Consumer popularity does not equal enterprise fit. ChatGPT still dominates mainstream surface area, but Claude and Gemini win for narrower, very real reasons.

- Tooling matters as much as raw model quality. OpenAI's built-in tools, Anthropic's coding-and-computer-use emphasis, and Google's grounding stack change the value of the base model.

- Context length is only useful if the model remains reliable over long tasks and the surrounding workflow can exploit that window.

- A slightly cheaper token price can still produce a more expensive system if the vendor fit, review process, or data path is wrong.
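That last point is easy to check with rough arithmetic. The sketch below compares two hypothetical options per accepted task, folding in retries and human review time alongside token spend. Every input (token cost per attempt, attempts per task, review minutes, reviewer hourly cost) is an illustrative assumption, not a measurement of any real model; the point is the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    """Hypothetical per-task profile for one model choice; all numbers are illustrative."""
    name: str
    token_cost_per_attempt: float   # USD of tokens for one attempt
    attempts_per_task: float        # average attempts until an acceptable result
    review_minutes_per_task: float  # human review time per accepted task


def cost_per_task(option: Option, reviewer_usd_per_hour: float) -> float:
    """Total cost of one accepted task: token spend across attempts plus review time."""
    tokens = option.token_cost_per_attempt * option.attempts_per_task
    review = option.review_minutes_per_task / 60 * reviewer_usd_per_hour
    return tokens + review


# Made-up profiles: cheaper tokens with more rework vs. pricier tokens with less.
cheap = Option("cheaper tokens, more rework", 0.40, 2.5, 12)
pricier = Option("pricier tokens, less rework", 0.95, 1.2, 5)

if __name__ == "__main__":
    for opt in (cheap, pricier):
        print(f"{opt.name}: ${cost_per_task(opt, 90.0):.2f} per task")
```

With these made-up inputs and a $90/hour reviewer, the cheaper-token option costs about $19.00 per task against about $8.64 for the pricier one, because review time dominates token spend. Plug in your own measured retry rates and review times before drawing conclusions.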

The practical choice by workflow

This is usually more useful than debating who won a single benchmark.

| Workflow | Best first look | Why |
|---|---|---|
| General-purpose AI product or internal AI platform | OpenAI | Best fit when you want the broadest mix of chat, API, tools, search, and execution in one vendor. |
| Serious codebase work and agent-style engineering tasks | Anthropic | Claude Opus 4.7 gives Anthropic a stronger premium code-agent ceiling, while Sonnet 4.6 remains the more practical daily workhorse. |
| Search-grounded enterprise assistant or Google-native stack | Gemini | Google's grounding, multimodality, and Vertex routing economics become strategically meaningful very quickly. |
| Cost-sensitive multimodal workloads at scale | Gemini or OpenAI mini-tier products | Google's pricing is attractive on the flagship side, while OpenAI's mini and nano offerings give useful fallback options. |
| High-trust long-context reasoning over documents | Claude or Gemini | Claude now has the stronger document-reasoning benchmark story with Opus 4.7, while Gemini still adds Google-side grounding, search, and infrastructure leverage. |

How real teams often route across the three ecosystems

Many buyers do not settle on one vendor forever. They choose a default and keep a second path for specialized work.

| Team pattern | Default choice | Secondary choice | Why |
|---|---|---|---|
| Broad internal AI rollout | OpenAI | Claude | OpenAI covers the most general ground; Claude picks up harder code or long-context reasoning tasks |
| Engineering-heavy product team | Claude | OpenAI | Claude often wins on coding depth while OpenAI remains useful for broader product surfaces and integrations |
| Google-native enterprise | Gemini | OpenAI or Claude | Gemini fits the surrounding stack while a second model may cover specialized coding or generalist tasks |
| Cost-sensitive multimodal application | Gemini | OpenAI mini-tier models | The economics can be strong on Google while OpenAI provides fallback coverage and broader tools |
| High-trust document and code workflows | Claude | Gemini | Claude makes the cleaner premium reasoning case; Gemini adds grounding and Google-side data leverage |
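A default-plus-secondary policy like the one in this table can be written down as plain data, which keeps the second path auditable. The sketch below is hypothetical: the pattern names, the workload tags such as "hard-code" or "grounding", and the `RoutePolicy.route` method are illustrative choices, not a routing feature offered by any vendor.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class RoutePolicy:
    """Default ecosystem plus per-workload overrides (all labels are illustrative)."""
    default: str
    overrides: dict[str, str] = field(default_factory=dict)

    def route(self, workload: str) -> str:
        # Use the specialized path only when the workload tag has an override.
        return self.overrides.get(workload, self.default)


# Hypothetical encoding of the team patterns in the table above.
POLICIES = {
    "broad internal rollout": RoutePolicy("OpenAI", {"hard-code": "Claude", "long-context": "Claude"}),
    "engineering-heavy product team": RoutePolicy("Claude", {"product-integration": "OpenAI"}),
    "google-native enterprise": RoutePolicy("Gemini", {"hard-code": "Claude", "generalist": "OpenAI"}),
    "cost-sensitive multimodal": RoutePolicy("Gemini", {"fallback": "OpenAI mini-tier"}),
    "high-trust document and code": RoutePolicy("Claude", {"grounding": "Gemini"}),
}

if __name__ == "__main__":
    policy = POLICIES["broad internal rollout"]
    print(policy.route("chat"), policy.route("hard-code"))
```

Keeping the policy in one table rather than scattering vendor checks through application code makes it easy to review and to change when a vendor's strengths shift, which they have done twice in this comparison alone.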

Where ChatGPT is strongest

OpenAI is still the safest generalist default if you want breadth, and GPT-5.5 materially strengthens that default. It posts a large Terminal-Bench 2.0 lead, improves OSWorld, BrowseComp, GDPval, and long-context scores over GPT-5.4, and is explicitly aimed at messy, multi-part work across code, research, spreadsheets, documents, and software interfaces.

That breadth is what makes OpenAI hard to displace. Even when another vendor is stronger on one narrow task, OpenAI often wins on total product completeness. If you need one ecosystem that can support chat experiences, APIs, coding workflows, search-grounded tasks, computer use, and tool execution, it still makes the most coherent all-around case.

Where Claude is strongest

Anthropic currently makes the sharpest case for coding-heavy and agentic work. Sonnet 4.6 is still the practical workhorse for teams balancing quality, speed, and cost. Opus 4.7 is now the premium generally available Claude ceiling: stronger than Opus 4.6 across many coding, knowledge-work, document, vision, memory, and computer-use benchmarks at the same $5 / $25 per-million-token price as Opus 4.6.

The Opus 4.7 migration details matter for real products. It uses the claude-opus-4-7 model ID, keeps 1M context and 128K output, moves advanced reasoning toward adaptive thinking and effort levels, drops non-default sampling parameters, changes token counting, omits visible thinking by default unless configured, and adds high-resolution image support up to 2576 pixels on the long edge. That makes it stronger for serious agents, but it also means teams should re-benchmark prompts, cost, latency, screenshots, and token budgets before swapping it into production.
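Because the high-resolution limit is stated as a long-edge size, screenshot pipelines may want to compute target dimensions before upload. This sketch only does the arithmetic: it assumes the 2576-pixel long-edge figure quoted above is the cap, preserves aspect ratio, and never upscales. The `fit_long_edge` helper is hypothetical, and other constraints (total pixel counts, file size, per-image token cost) are not modeled; check Anthropic's current vision docs for those.

```python
MAX_LONG_EDGE = 2576  # long-edge cap for Opus 4.7 images, as quoted in this article


def fit_long_edge(width: int, height: int, max_long_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side is at most max_long_edge.

    Aspect ratio is preserved, and images already within the cap are never upscaled.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    if max(width, height) <= max_long_edge:
        return width, height
    if width >= height:
        # Landscape or square: pin the width to the cap and scale the height.
        return max_long_edge, max(1, round(height * max_long_edge / width))
    # Portrait: pin the height to the cap and scale the width.
    return max(1, round(width * max_long_edge / height)), max_long_edge
```

For example, a 3840 x 2160 screenshot becomes 2576 x 1449, while a 1920 x 1080 capture passes through unchanged. Re-running your screenshot evals at the new size is part of the re-benchmarking the migration calls for.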

Where Gemini is strongest

Gemini is the most strategic choice when Google's surrounding assets matter. Gemini 3.1 Pro is rolling out across the Gemini API, Vertex AI, Gemini app, NotebookLM, Gemini CLI, Google Antigravity, and Android Studio, and Google's broader docs tie the model family directly to Search grounding, File Search, URL context, code execution, structured outputs, and a 1M-token context profile. The preview status is important for production buyers because naming, tooling, and availability can vary by surface.

That matters because the Gemini choice is rarely just about model quality. It is about distribution through Google products, easier attachment to Google infrastructure, and a more natural fit for teams already living in Workspace, BigQuery, GCP, or search-heavy product flows.

The current lock-in and procurement question

A lot of model comparisons still act as if the decision ends with price or benchmark scores. In real organizations, the harder question is procurement and integration gravity. OpenAI pulls you toward the richest all-purpose tool surface. Anthropic pulls you toward a narrower but often more trusted coding-and-agent stack with a stronger premium Opus tier than it had before. Google pulls you toward deeper cloud and search integration.

That means the best choice is often the one whose surrounding product assumptions already match your team. The wrong ecosystem can force awkward wrappers, duplicated infrastructure, or workflow friction that overwhelms the headline token price.

What each vendor is really optimizing for

OpenAI is optimizing for platform breadth. Anthropic is optimizing for trusted depth on coding and agent execution. Google is optimizing for ecosystem leverage. Once you see that, the market feels less confusing. Each vendor is trying to become the default in a different layer of the AI stack.

That is also why the best answer can differ for chat, API use, internal tooling, customer-facing products, and enterprise deployment. A model family can be excellent in one lane and still be the wrong default for another.

Bottom line

Pick OpenAI if you want the broadest, most mature generalist platform and are willing to pay for that surface area.

Pick Anthropic if your hardest problem is codebase work, multi-step agent execution, document reasoning, computer-use agents, or high-trust reasoning over large contexts.

Pick Gemini if Google infrastructure, search grounding, multimodality, and routing economics are strategic advantages rather than nice-to-haves.

Frequently asked questions

These are the practical questions buyers usually mean when they search ChatGPT vs Claude vs Gemini.

Which AI model is best for coding in 2026?

Claude Opus 4.7 has the strongest public SWE-bench Pro signal among generally available Claude/OpenAI/Gemini comparison points, while GPT-5.5 has the strongest current Terminal-Bench 2.0 signal. For product teams, that means Claude deserves first look for deep codebase reasoning and review, while GPT-5.5 deserves first look for terminal-heavy agents and broad developer workflows.

Which model is best for long context and document-heavy work?

Claude, Gemini, and GPT-5.5 all make serious long-context cases, but in different ways. Claude Opus 4.7 is compelling for document-heavy reasoning, high-resolution screenshots, and long-running agent work. Gemini 3.1 Pro gives Google-native teams a 1M-token context profile with search grounding and file tools. GPT-5.5 improves OpenAI's long-context and computer-use story while keeping the broadest platform surface.
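A quick pre-flight check can tell you whether a document set plus the answer you need actually fits a model's window. This sketch uses the rounded figures quoted in this article (a 1M-token window for all three, 128K maximum output for Opus 4.7, 64K for Gemini 3.1 Pro) and conservatively treats input and output as sharing one budget. That is an assumption: vendors differ on whether output counts against the window, GPT-5.5's output cap is not stated here so it is left unset, and `prompt_tokens` should come from each vendor's own token counter because tokenizers differ. The model labels are illustrative keys.

```python
from typing import Optional

# Rounded limits as quoted in this article; verify against current vendor docs.
# model label -> (context window tokens, max output tokens or None if not stated here)
LIMITS: dict[str, tuple[int, Optional[int]]] = {
    "gpt-5.5": (1_000_000, None),
    "claude-opus-4-7": (1_000_000, 128_000),
    "gemini-3.1-pro": (1_000_000, 64_000),
}


def fits(model: str, prompt_tokens: int, wanted_output_tokens: int) -> bool:
    """Conservative check: output within the stated cap and prompt + output within the window."""
    window, max_output = LIMITS[model]
    if max_output is not None and wanted_output_tokens > max_output:
        return False
    return prompt_tokens + wanted_output_tokens <= window
```

With these figures, an 850K-token corpus plus a 100K-token report fits the Opus 4.7 limits but exceeds the quoted Gemini output cap, which is the kind of mismatch a headline "1M context" number hides.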

Should teams choose ChatGPT, Claude, or Gemini based on price alone?

Usually no. Token pricing matters, but integration cost, tool availability, review workflows, and reliability on your real tasks matter more. A cheaper model can still produce a more expensive system if it fits poorly.

Which ecosystem is least risky for general adoption?

OpenAI is still the safest all-around default if you need one broad AI platform for many use cases. Anthropic and Google become stronger choices when you have clearer workflow specialization.

Should teams standardize on one model vendor or route across several?

Many teams should do both: pick one default ecosystem to simplify governance and vendor management, then keep a second path for specialized workloads such as coding, multimodal grounding, or price-sensitive batch work. The right answer depends on how often those specialized workloads matter.

Visual source gallery

These are the current sources behind this page as of 2026. The added depth here comes from leaning on official pricing, benchmark, and model docs first, then adding market-context sources where they help explain the product landscape.

Best single source for OpenAI pricing and the current OpenAI tool surface, including web search, containers, batch, flex, and priority processing.

Primary current source for OpenAI's GPT-5.5 benchmark table, pricing, agentic coding, computer-use, knowledge-work, and safety positioning.

The clearest source on Anthropic's default workhorse model: coding, computer use, long-context reasoning, and 1M beta context.

Primary source for the new Opus 4.7 benchmark table, pricing continuity, high-resolution vision, memory, agentic coding, and current premium Claude positioning.

Essential for production buyers because it covers the model ID, adaptive thinking, removed sampling parameters, token-counting changes, task budgets, and high-resolution image implications.

Primary current Google source for Gemini 3.1 Pro, including rollout across consumer, developer, and enterprise surfaces plus its harder-reasoning positioning.

Matters because Google's enterprise story is shaped by Gemini pricing, grounding charges, and routing options on Vertex.

Adds market context: ChatGPT still leads the consumer surface, Gemini has distribution pressure, and Claude is gaining traction rather than winning mass share.

Relevant systems reference on why model choice has to be evaluated alongside retrieval, policy checks, routing, observability, and business workflow design.